Dawn of a New Era — Or the Slow Fade of Everything We Took for Granted

How AI is changing the way we think, work, and connect — from someone who builds the interfaces you use every day.

It's December 2025, a slow Sunday afternoon. I'm in a coffee shop, and the guy at the next table is hammering away at his laptop, prompting an AI to write his essay. He doesn't really read the output. He skims it, hits submit, and goes back to scrolling. The whole thing takes maybe 8 or 10 minutes.

I'm not judging him. I've done the same thing. We all have.

I've been a front-end developer for over a decade. I've designed and built e-commerce experiences for clients across Vietnam, Singapore, France, the US, and the UK. My job has always been the same at its core: understand people, then build something that feels right in their hands. Pixels, animations, micro-interactions — all serving that one moment where a user thinks, "This just works."

But the ground is shifting. AI isn't a shiny tool on the shelf anymore. It's becoming the shelf, the store, the whole environment. And I think we need to have an honest conversation about what that actually means — not just for our careers, but for our brains, our kids, and how we relate to each other.

This is my attempt at that.

Work Is Being Redesigned, Not Just Automated

A few years ago, "AI at work" meant email autocomplete and a chatbot on your landing page that annoyed more people than it helped. Now we're looking at agentic AI — systems that don't just respond to prompts but execute multi-step workflows on their own. Less "digital assistant," more "virtual coworker who never sleeps."

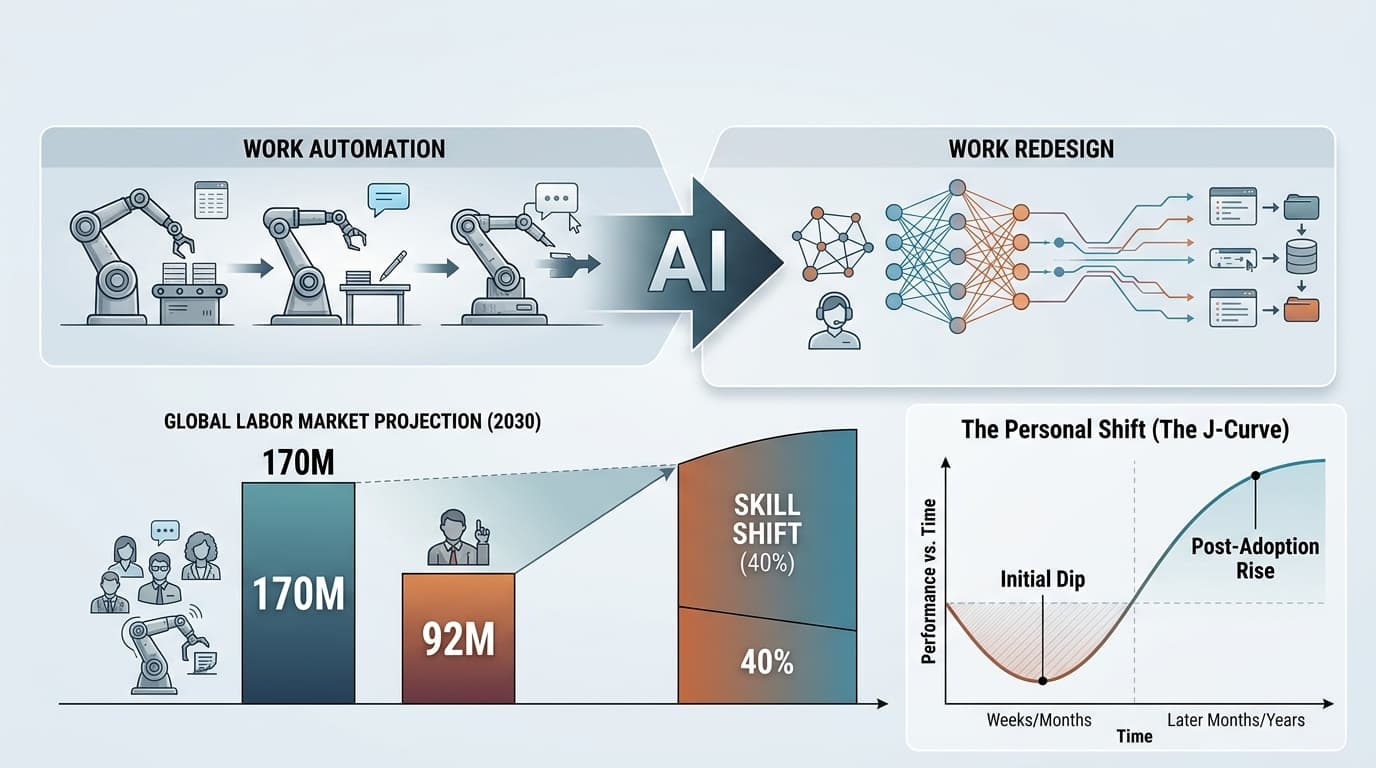

The numbers: by 2030, agentic AI could unlock around $2.9 trillion in annual value in the US alone. Globally, forecasts point to a net gain of 78 million jobs, but that headline hides the churn underneath: 170 million new roles created and 92 million displaced. Nearly 40% of existing work skills will need to change.

I've felt this. A client in France once hired me to build a product configurator — weeks of custom UI, edge cases, responsive layouts. Today, a good chunk of that could be scaffolded by AI in an afternoon. My value didn't vanish. It moved. I went from "the person who writes the code" to "the person who knows why this interaction should feel a certain way."

That shift is disorienting. Researchers call it the AI adoption J-curve — a dip in performance when you first integrate AI, before the gains kick in. I lived that curve. The first months of weaving AI into my workflow felt slower, not faster. I kept second-guessing outputs, rechecking generated code, dealing with a weird imposter syndrome where I wasn't sure if the work was even mine.

It got better. But only because I sat with the discomfort instead of ignoring it.

The Most Valuable Skill Now Isn't Technical

Here's what a decade of building interfaces taught me that turned out to be surprisingly future-proof: empathy is a technical skill.

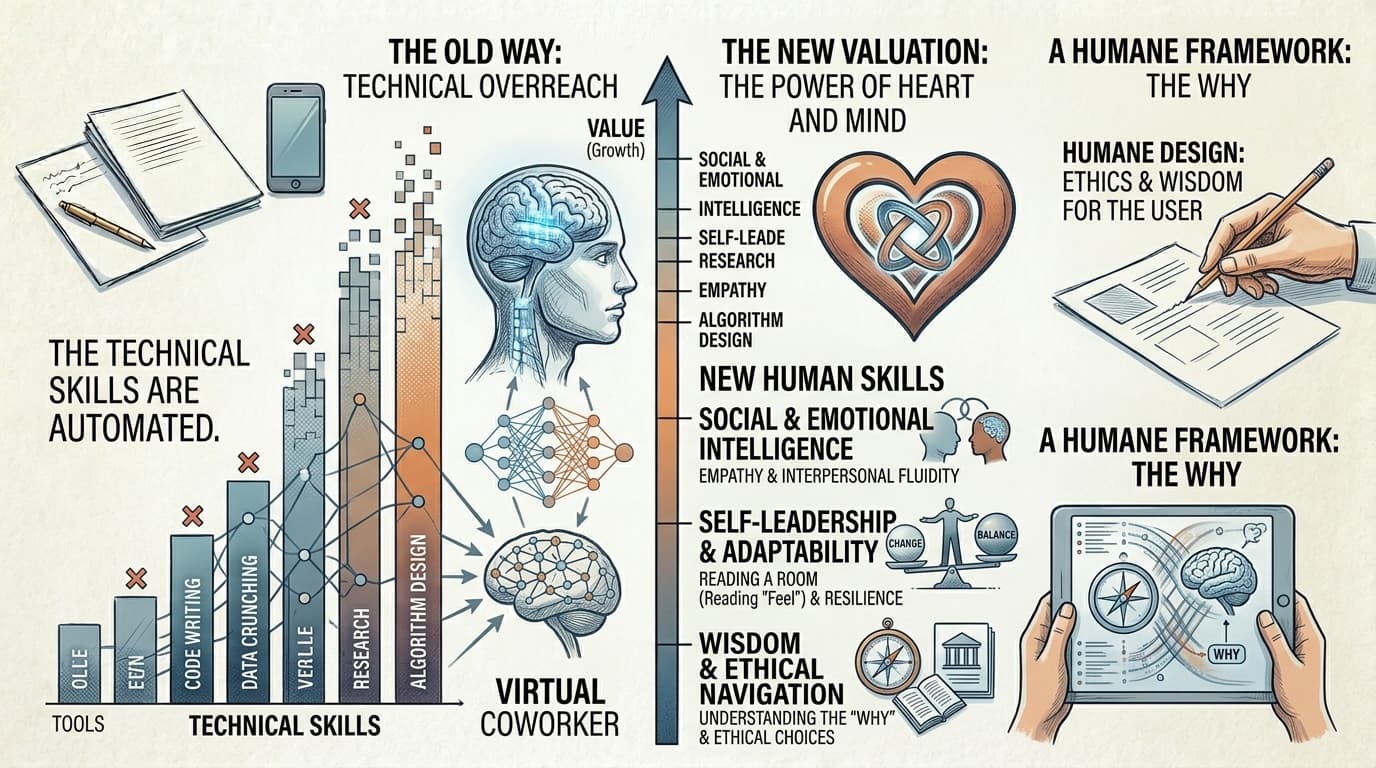

The data supports this. As AI handles more digital tasks — writing, research, data crunching — the skills that stay human are interpersonal ones. Coaching, negotiation, reading a room. Sitting on a call with a client in Saigon who can't quite explain what they want and finding the answer through feel, not logic. That's not something you prompt your way to.

The fastest-growing skill across industries right now is "AI Fluency" — knowing how to direct and evaluate AI. But I'd say that's half the picture. The other half is taste. Knowing when the AI-generated layout is 90% there but feels dead. When the copy is correct but the tone is off. When to override the machine because you understand something about the user that training data never captured.

I work with junior developers who can out-prompt me all day. But when I ask them why they chose a particular pattern, they shrug. The "why" is where human value lives. It's the part we risk losing if we don't pay attention.

The Kids Are Alright — But the Algorithm Isn't

This is where it gets personal for me. Not as a developer, but as someone watching the next generation grow up inside these systems.

Gen Alpha — kids born after 2012 — are the first real AI natives. They don't see AI as technology. It's like electricity: just there. Gen Z is the "Co-pilot Generation," using AI for school, side hustles, creative projects. Fearless and curious. I respect that.

But there's a cost.

AI-driven social media feeds prioritize emotionally intense content. For a developing brain, this creates what researchers call a "recursive mood amplification cycle." Anxiety triggers the algorithm, the algorithm serves more anxiety-inducing content, the loop tightens. Depression, body image issues, constant comparison — amplified by systems built to maximize engagement, not wellbeing.

As someone who's spent years designing for engagement — countdown timers, urgency cues, "only 2 left" nudges — I know how the machine works. And I think our industry needs to reckon with what we've optimized for, and who's paying the price.

Building things that feel good isn't enough. We need to ask: good for whom? At what cost?

The Memory Problem

Here's the part that actually scares me.

There's research on something called cognitive atrophy — when we outsource thinking to AI, the brain pathways we'd normally use start weakening. One MIT study found AI users worked 60% faster, but cognitive load dropped 32%. And 83% couldn't remember a passage they'd just "written" with AI.

I've caught myself here. I'll use AI to draft a technical proposal, ship it, then blank during the follow-up call because the reasoning never went through my brain. The words had my name on them. The thinking didn't.

There's a creative angle too. When groups all use AI for ideation, outputs converge toward the same median. The odd, unexpected, distinctly human ideas get ironed out. For a designer, that's a problem. Design is about divergence — seeing what others don't. If we all land on the same AI-suggested palette, layout, and copy, we get a web that looks identical everywhere.

(You could argue we were already headed there. AI just sped it up.)

Different Generations, Different Trust Levels

How you feel about AI depends a lot on when you were born.

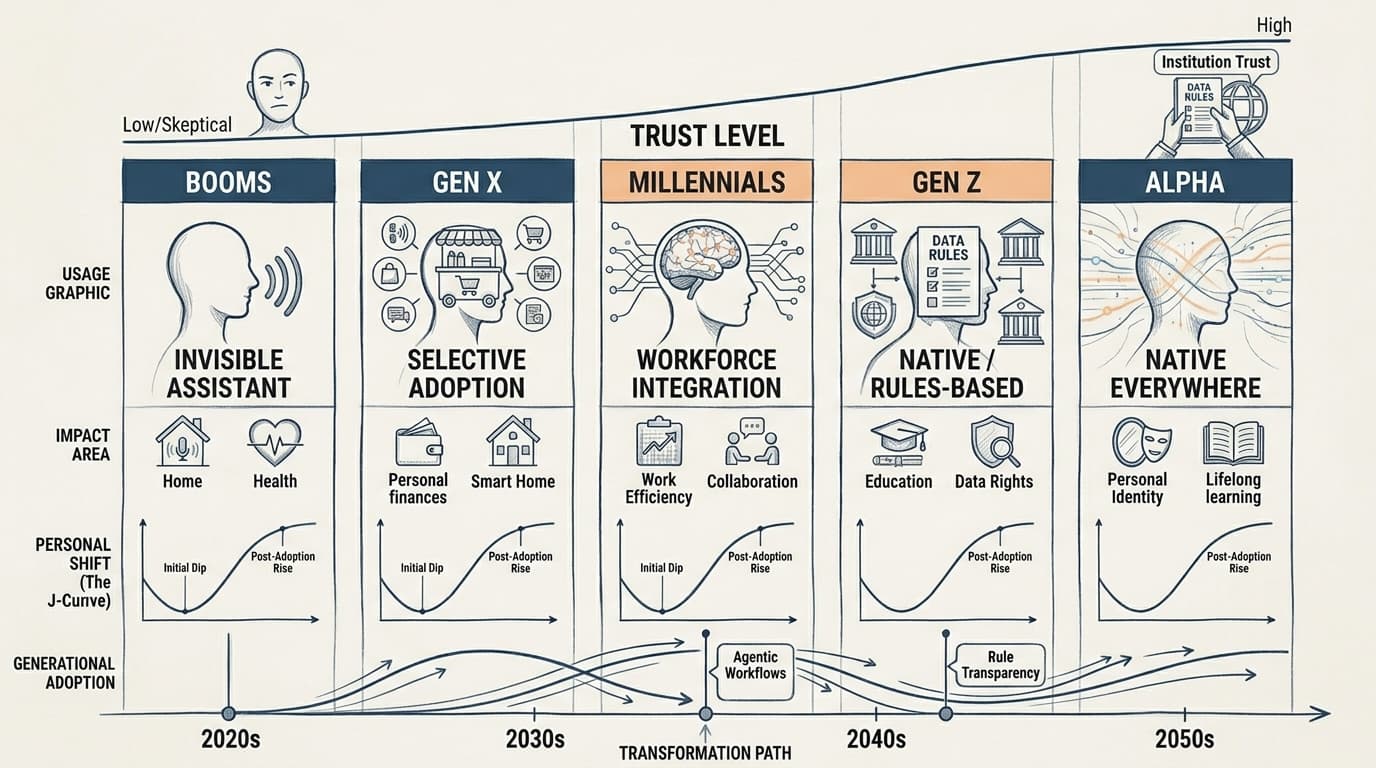

Millennials — my generation — are the main professional users. We're pragmatic. We adopted AI to do more with less, maybe get some work-life balance back. Gen X picks it up where convenient, ignores it where it feels intrusive. Boomers engage mostly when AI is invisible — baked into voice assistants or auto-suggestions they don't even register as "AI."

On the younger end, Gen Z and Alpha don't have trust issues with AI itself. They have trust issues with the institutions deploying it. They want transparency, fairness, control. They're fine with AI being everywhere — they just want to know the rules.

This matters for what I do every day. When I build an experience for a Vietnamese e-commerce client targeting young shoppers, the AI needs to feel like a collaborator. When I'm designing for an older UK audience, the AI should be invisible — helpful without being obvious. Same technology, totally different design approach based on generational trust.

That kind of nuance? AI can't navigate it. Not yet.

The World Isn't Playing by the Same Rules

Quick detour into geopolitics, because it directly affects the products we ship.

The EU has the AI Act — risk categories, transparency mandates, human rights front and center. Principled, but heavy. Anyone who's dealt with GDPR compliance for a European client knows the friction. Now imagine that, multiplied.

ASEAN, where I live and work, takes the opposite approach: decentralized, voluntary, flexible. Great for speed and investment. Not so great when rules differ wildly from country to country and you're building cross-border experiences.

China is building a fully sovereign AI stack — chips to models — to insulate from US export controls. Models like DeepSeek proved you don't need Western-scale hardware to compete.

For developers, the takeaway is simple: context is everything. The AI features you ship in Ho Chi Minh City might be illegal in Brussels. The data you collect in Singapore might not cross borders the same way next year. The global internet is fragmenting, and our work sits right on the cracks.

So Where Does That Leave Us?

I don't have a neat answer. Anyone who does is selling something.

But here's what I've landed on after a decade of building digital products and a solid stretch of reckoning with what AI means for my craft:

The value is in what you can think, not what you can produce. AI generates a landing page in seconds. It can't tell you if that page makes someone feel understood. That's still on us.

Protect your ability to think without AI. I've started doing things the hard way on purpose — sketching by hand, writing first drafts solo, working through problems on paper. Not because I'm anti-AI. Because I don't want to lose the muscle. Escalators exist. Your legs still need exercise.

Design with conscience. If you build interfaces, you shape behavior. Every dark pattern, every engagement loop, every "personalized" feed quietly messing with a teenager's self-image — those are choices. We can make different ones.

Stay curious, stay humble. The tech moves. The job titles change. The only constant is your willingness to learn, unlearn, and relearn.

We're already at a crossroads. Whether we see genuine progress or a slow cognitive decline depends on the choices being made right now, in boardrooms, codebases, and classrooms, and wherever people are prompting machines to do the thinking for them.

I'm staying in the conversation. I hope you will too.

After a lifetime of polished pixels and client-approved code, I'm finally shipping some of my own thoughts. Consider this the first of many.